Evaliphy

Test Your AI Features Like The Rest Of Your Product

Launched Apr 2026

What is Evaliphy?

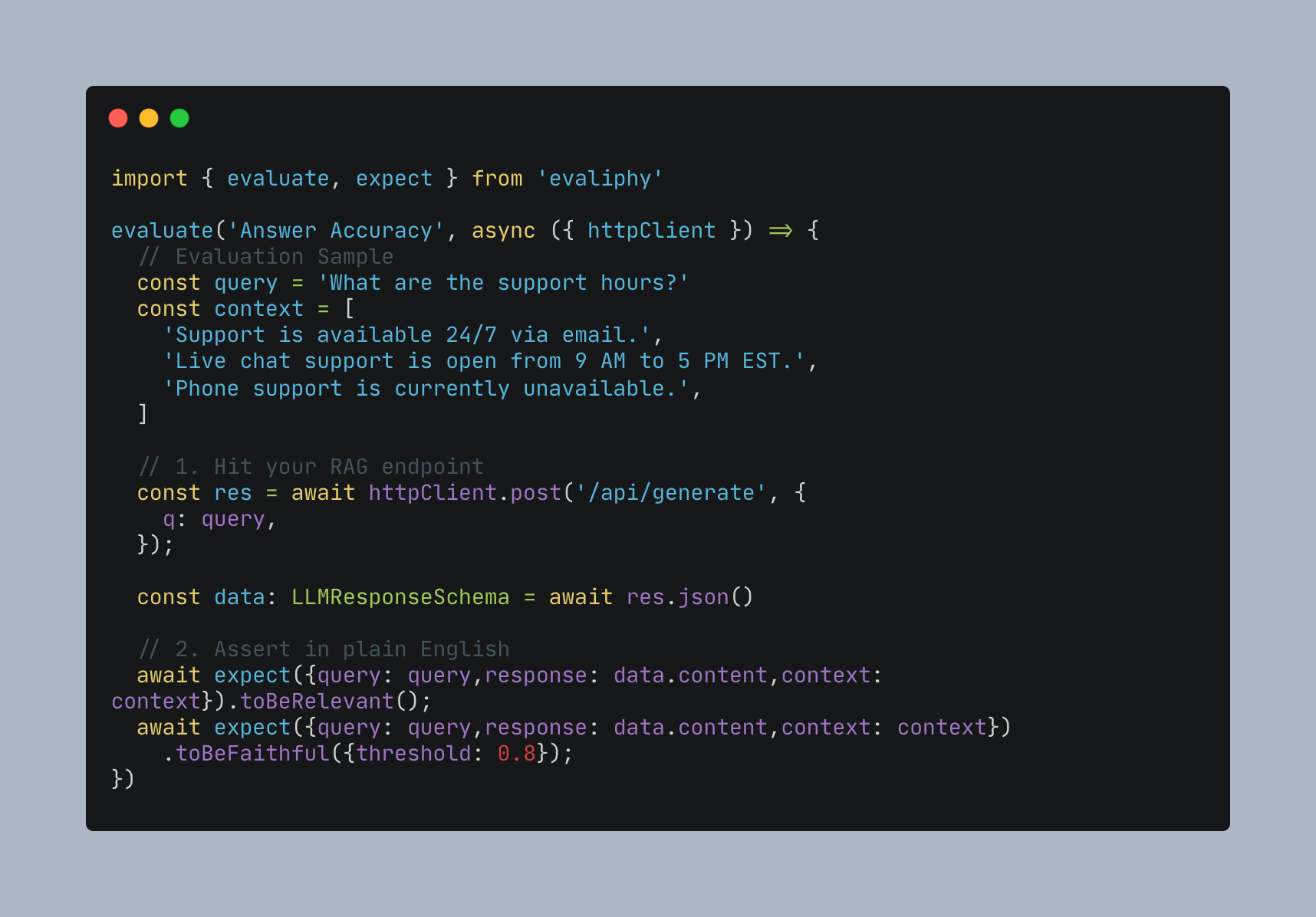

Evaliphy is an AI evaluation framework that treats your AI system as a black box. Write assertions against your real API, get structured results, and catch regressions in CI, without touching the internals of the AI system or doing prompt engineering from scratch. Built-in LLM-as-Judge assertions handle the hard parts. You focus on writing evaluations, not wiring up models.

What it solves

- Simplifies AI system testing

- Removes the need for the considerable machine learning knowledge that existing tools require

- Enables QA engineers to write end-to-end tests for AI systems

- Lets AI quality be tested independently, not only by the people who built it

How it works

- Write assertions in TypeScript against your real running API

- Built-in LLM-as-Judge scores responses — no prompt writing needed

- Runs in CI like any other test suite — fails the build on regressions

- HTML, JSON, and Excel reports your whole team can read and act on
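
To make the flow above concrete, here is a minimal TypeScript sketch of black-box evaluation. It does not use Evaliphy's actual API: every name in it (`AskFn`, `JudgeFn`, `runEvals`, `exitCodeFor`, `keywordJudge`, `fakeApi`) is hypothetical and chosen for illustration. The judge is a simple keyword-overlap stand-in for an LLM-as-Judge, and the API is a stub, so the sketch runs offline.

```typescript
// Hypothetical sketch: none of these names come from Evaliphy's real API.

// Sends a question to the system under test and returns its answer.
// In a real suite this would wrap an HTTP call to the running API.
type AskFn = (question: string) => Promise<string>;

// Scores an answer between 0 and 1. A real judge would call an LLM.
type JudgeFn = (question: string, answer: string, expected: string) => Promise<number>;

interface EvalCase {
  name: string;
  question: string;
  expected: string;
  threshold: number; // minimum judge score required to pass
}

interface EvalResult {
  name: string;
  score: number;
  passed: boolean;
}

// Stand-in judge: fraction of expected keywords that appear in the answer.
const keywordJudge: JudgeFn = async (_question, answer, expected) => {
  const words = expected.toLowerCase().split(/\s+/).filter((w) => w.length > 0);
  if (words.length === 0) return 0;
  const text = answer.toLowerCase();
  const hits = words.filter((w) => text.includes(w)).length;
  return hits / words.length;
};

// Runs every case against the black-box system and collects structured results.
async function runEvals(cases: EvalCase[], ask: AskFn, judge: JudgeFn): Promise<EvalResult[]> {
  const results: EvalResult[] = [];
  for (const c of cases) {
    const answer = await ask(c.question);
    const score = await judge(c.question, answer, c.expected);
    results.push({ name: c.name, score, passed: score >= c.threshold });
  }
  return results;
}

// Maps results to a process exit code so a CI job can fail on regressions.
function exitCodeFor(results: EvalResult[]): number {
  return results.every((r) => r.passed) ? 0 : 1;
}

// Stub standing in for the real running API.
const fakeApi: AskFn = async (question) =>
  question.includes("refund") ? "Refunds are accepted within 30 days." : "I don't know.";

const cases: EvalCase[] = [
  { name: "refund policy", question: "What is the refund window?", expected: "30 days", threshold: 0.8 },
];

// Structured JSON output that a report generator could consume.
runEvals(cases, fakeApi, keywordJudge).then((results) => {
  console.log(JSON.stringify(results, null, 2));
});
```

In a CI job, the value returned by `exitCodeFor` would be used as the process exit code, so a failing case fails the build like any other test suite.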

Key Features

AI Eval

AI System Testing

E2E Testing for AI

AI Feature Testing

AI Test Automation

RAG Eval

RAG Testing

Integrations

GitHub

Jenkins

Bamboo
